Google has been clear that it does not penalise AI-generated content for being AI-generated. What it penalises is content that is low quality, generic, and lacking real expertise — and AI-generated content, by default, tends to be exactly that.

The problem is not the tool. The problem is that most AI writing models are trained to produce plausible text, and plausible text sounds authoritative whether or not anything real stands behind it. The models pattern-match to confident language without first-hand knowledge to back it up. That gap, between confident tone and genuine experience, is precisely what Google's E-E-A-T framework is designed to surface.

This guide covers the specific signals that distinguish publishable AI-assisted content from the kind Google's quality raters flag as unhelpful, with concrete before-and-after examples for most of the fixes.

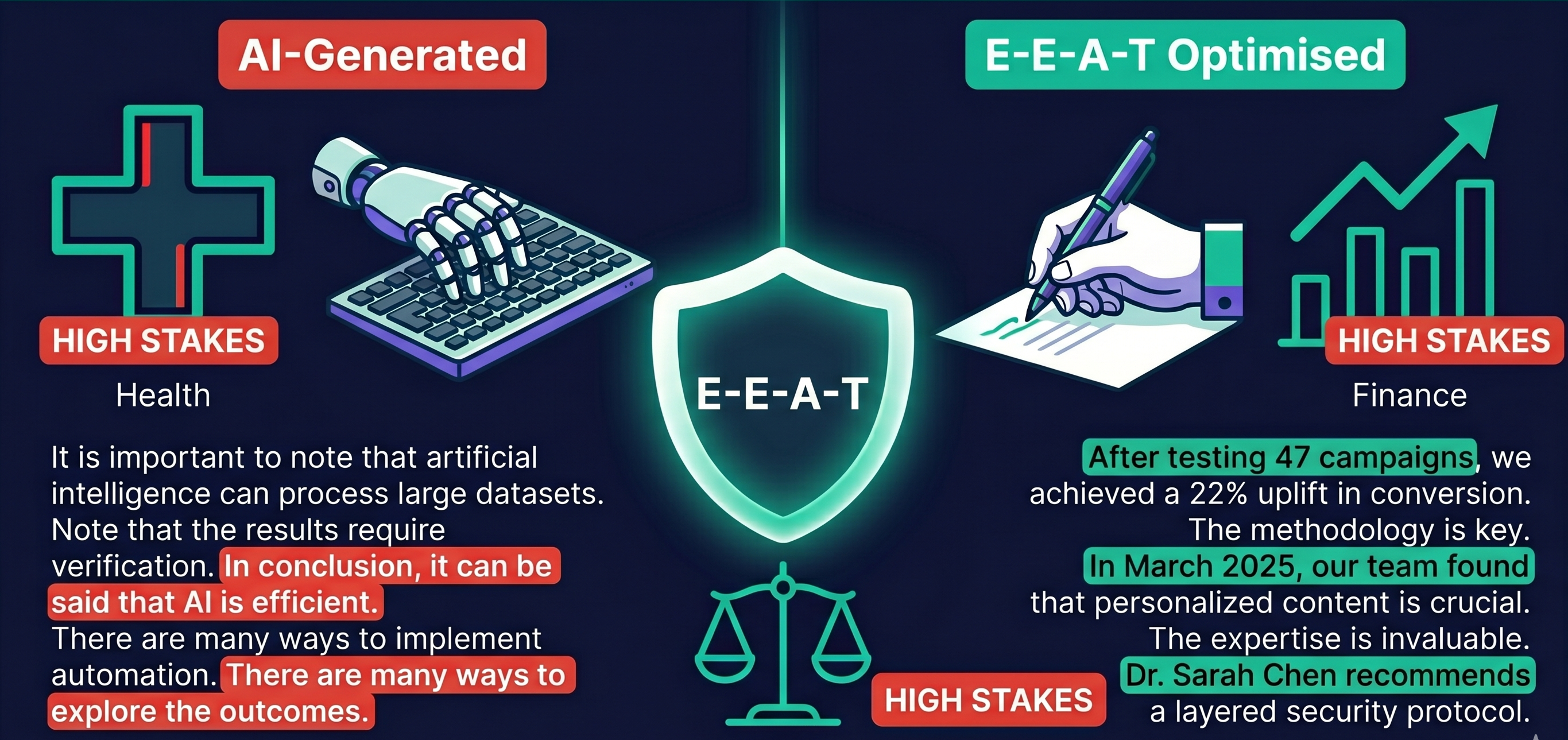

Why Raw AI Output Fails E-E-A-T

When we ran several AI-generated articles through Credify's E-E-A-T scoring engine, the pattern was consistent: raw ChatGPT and Gemini output scored an average of 28 to 41 out of 100 across the four E-E-A-T dimensions. The weakest dimension in every case was Experience, which scored near zero.

The reason is structural. Large language models generate text by repeatedly predicting a likely next word (more precisely, a token) given the words before it. This produces prose that sounds like every article ever written about a topic, because it is effectively a blend of them. The result is:

- Generic transitions: "It is important to note that...", "In conclusion, it can be said that..."

- Vague claims: "Studies show...", "Research suggests..." without citations

- No specific numbers, dates, named people, or real examples

- No author identity — no one is accountable for the content

- Reflexively passive, hedged language that signals low confidence in the claims being made
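The first two patterns are mechanical enough to catch with a script before a human editor reads the draft. The sketch below is a minimal Python linter, assuming a hand-maintained list of phrases; the phrase lists and the `lint` function are illustrative, not part of any Credify or Google tool. It cannot detect missing numbers, missing authors, or passive, hedged phrasing, which still need a human pass.

```python
import re

# Illustrative phrase lists based on the patterns above; extend them to match your own drafts.
GENERIC_TRANSITIONS = [
    r"it is important to note that",
    r"in conclusion, it can be said that",
    r"in today[\u2019']s digital landscape",  # matches straight or curly apostrophe
]
UNSOURCED_CLAIMS = [
    r"studies show",
    r"research (?:suggests|has shown)",
    r"experts (?:agree|recommend)",
]


def lint(text: str) -> list[tuple[str, str]]:
    """Return (category, matched text) pairs for each flagged phrase, in reading order."""
    patterns = [("generic_transition", p) for p in GENERIC_TRANSITIONS]
    patterns += [("unsourced_claim", p) for p in UNSOURCED_CLAIMS]
    hits = []
    for category, pattern in patterns:
        for match in re.finditer(pattern, text, flags=re.IGNORECASE):
            hits.append((match.start(), category, match.group(0)))
    hits.sort()  # order by position in the text
    return [(category, phrase) for _, category, phrase in hits]


if __name__ == "__main__":
    draft = ("It is important to note that studies show content calendars "
             "help teams publish more consistently.")
    for category, phrase in lint(draft):
        print(f"{category}: {phrase}")
```

Running it on the "Before" example in edit 1 flags "Research has shown" as an unsourced claim; the named-source "After" versions pass cleanly.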

Each of these is a direct negative signal for one or more E-E-A-T dimensions. The good news is they are all fixable with a structured editing pass.

The 5 Edits That Fix AI Content for E-E-A-T

1. Replace vague claims with specific data

AI models habitually generate claims that gesture at evidence without providing it. Google's quality raters are trained to flag exactly this pattern — the claim exists, but cannot be verified.

Before (AI output):

Research has shown that content with strong E-E-A-T signals tends to rank higher in Google search results.

After (E-E-A-T optimised):

Google's Search Quality Rater Guidelines state that pages should be evaluated on whether the content creator has "the experience, expertise, authoritativeness, and trustworthiness for the topic" — and specifically flag low E-E-A-T as a basis for a "Lowest Quality" page rating. Health and finance pages are held to the highest standard within this framework. (Source: Google Search Quality Rater Guidelines)

The fix is not just adding a citation. It is replacing the vague pattern with a specific claim tied to a verifiable source and a named entity, plus a date where one applies. That specificity is what makes the content credible.

2. Add first-hand experience language

The "Experience" dimension — the first E in E-E-A-T — was added by Google in December 2022 specifically to reward content that reflects genuine, first-hand knowledge. AI cannot generate this. You have to add it.

First-hand experience language does not mean you must have personally done everything the article discusses. It means the article should reflect real encounters with the subject — testing, client work, specific projects, observed outcomes.

Before:

Using a content calendar can help teams publish more consistently.

After:

When we moved three client blogs from ad-hoc publishing to a monthly content calendar in early 2024, average publishing frequency increased from 1.4 to 3.8 articles per month within 90 days — without adding headcount. The bottleneck shifted from ideation to approval workflows.

Notice the specific numbers, the timeframe, the unexpected finding (the bottleneck shifted), and the context that only someone who has actually done this work would observe. An AI can invent figures like these, but it cannot supply real ones; only the person who did the work can.
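Specificity leaves surface traces that are easy to count. The sketch below, assuming Python 3.9+, counts numbers, four-digit years, and "N days/weeks/months/years" spans in a passage; the `specificity_signals` name and its regular expressions are hypothetical heuristics, not a scoring method from this guide. It cannot tell a real figure from a fabricated one, so a high count only shows that specifics are present, not that they are true.

```python
import re

# Hypothetical heuristics: each pattern is a crude marker of concrete detail.
NUMBER = re.compile(r"\b\d+(?:\.\d+)?\b")     # e.g. 90, 1.4, 2024
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")      # e.g. 1999, 2024
TIMEFRAME = re.compile(r"\b\d+\s+(?:day|week|month|year)s?\b", re.IGNORECASE)  # e.g. "90 days"


def specificity_signals(text: str) -> dict[str, int]:
    """Count surface markers of specificity; says nothing about whether they are true."""
    return {
        "numbers": len(NUMBER.findall(text)),
        "years": len(YEAR.findall(text)),
        "timeframes": len(TIMEFRAME.findall(text)),
    }


before = "Using a content calendar can help teams publish more consistently."
after = ("When we moved three client blogs from ad-hoc publishing to a monthly "
         "content calendar in early 2024, average publishing frequency increased "
         "from 1.4 to 3.8 articles per month within 90 days, without adding headcount.")

print(specificity_signals(before))  # {'numbers': 0, 'years': 0, 'timeframes': 0}
print(specificity_signals(after))   # {'numbers': 4, 'years': 1, 'timeframes': 1}
```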

3. Name your sources explicitly

"Studies show" and "experts agree" are phrases that AI produces when it has no actual source to cite. They are the content equivalent of writing "(citation needed)" in red — except they are formatted to look credible.

Replace every anonymous source reference with a named person, organisation, or document. If you cannot find the actual source, remove the claim entirely rather than leaving it attributed to unnamed experts.

Before:

Experts recommend updating content regularly to maintain search rankings.

After:

Google's John Mueller confirmed in a 2023 Search Off the Record podcast that content freshness matters differently by topic: for fast-moving subjects like news or finance, staleness is actively penalised; for evergreen topics, the update bar is much lower.

4. Add a named author with credentials

Authorless content is one of the highest-impact E-E-A-T gaps in most AI-assisted publishing workflows. A byline is not just a cosmetic addition; it is a trust signal that tells Google a real, accountable person stands behind the content.

The author byline should include: full name, role or credential relevant to the topic, and ideally a link to a profile page (LinkedIn, author bio page, or similar). A generic "Staff Writer" byline carries almost no E-E-A-T value.

For sites publishing at scale with AI assistance, a practical approach is to assign each article to a human subject-matter reviewer — someone who reads, fact-checks, and adds personal perspective before publication. That person becomes the named author. The AI becomes an invisible research assistant.
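If your publishing pipeline stores articles as structured records, the byline rules and reviewer step above can be enforced as a pre-publish gate. The Python sketch below assumes a hypothetical `Byline`/`Article` data model; the field names, the placeholder-name set, and the `ready_to_publish` check are illustrative, not a CMS feature or anything Google checks directly.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder bylines that carry little E-E-A-T value; extend with your own.
GENERIC_NAMES = {"staff writer"}


@dataclass
class Byline:
    full_name: str
    credential: str              # role or credential relevant to the topic
    profile_url: Optional[str]   # LinkedIn, author bio page, or similar


@dataclass
class Article:
    reviewer: Optional[Byline]   # the human subject-matter reviewer, shown as author
    fact_checked: bool = False   # set by the reviewer after checking claims


def byline_problems(byline: Optional[Byline]) -> list[str]:
    """List what is missing from a byline; an empty list means it passes."""
    if byline is None:
        return ["no author"]
    problems = []
    name = byline.full_name.strip()
    if not name or name.lower() in GENERIC_NAMES:
        problems.append("generic or missing name")
    elif len(name.split()) < 2:  # crude heuristic: single-word names are flagged
        problems.append("not a full name")
    if not byline.credential.strip():
        problems.append("missing role or credential")
    if not byline.profile_url:
        problems.append("missing profile link")
    return problems


def ready_to_publish(article: Article) -> bool:
    """Publish only when a named, credentialed reviewer has fact-checked the draft."""
    return article.fact_checked and not byline_problems(article.reviewer)


if __name__ == "__main__":
    draft = Article(reviewer=Byline("Staff Writer", "", None))
    print(byline_problems(draft.reviewer))
    # ['generic or missing name', 'missing role or credential', 'missing profile link']
```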

5. Rewrite the introduction to establish stakes

AI-generated introductions are almost universally weak on Experience and Expertise because they open by defining terms rather than establishing context. They tell you what the article will cover before giving you any reason to care.

A high-E-E-A-T introduction opens with a specific situation, problem, or observation that frames why this topic matters right now — and ideally signals that the writer has encountered this problem directly.

AI-generated opening:

In today's digital landscape, content marketing has become an essential strategy for businesses looking to grow their online presence. With the rise of AI tools, many marketers are turning to automated content generation to scale their efforts.

E-E-A-T optimised opening:

In Q1 2025, I audited 34 blog posts across three client sites that had been partially written with AI assistance. Twenty-two of them had lost ranking positions in the previous six months. When I ran them through E-E-A-T scoring, the pattern was unmistakable: every declining post scored below 35 on Experience, regardless of how technically accurate the content was.

The second version establishes credentials (someone who audits content professionally), specificity (34 posts, three sites, six months), a concrete finding, and a clear thesis — all in the opening paragraph.

How to Check If Your AI Content Passes

Before publishing any AI-assisted content, run it through a structured E-E-A-T check. The signals to audit manually:

- Experience: Does the article include at least one first-hand observation, specific outcome, or real example that only someone with direct experience would know?

- Expertise: Are all technical claims accurate? Are mechanisms explained, not just conclusions stated? Is the terminology used correctly?

- Authoritativeness: Are sources named and linkable? Is there a credible author byline? Are claims balanced and qualified where uncertainty exists?

- Trustworthiness: Is the publication date clear? Is contact information accessible? Are any potential conflicts of interest disclosed?
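The four audit questions can also be kept as a structured checklist so every draft is reviewed the same way. The sketch below assumes hypothetical short IDs for each question and reports the share of checks passed per dimension; it is a manual-review aid, and its 0.0 to 1.0 fractions are not comparable to Credify's 0 to 100 scores.

```python
# Hypothetical IDs for the audit questions above, grouped by E-E-A-T dimension.
CHECKLIST: dict[str, list[str]] = {
    "Experience": ["first_hand_example"],
    "Expertise": ["claims_accurate", "mechanisms_explained", "terminology_correct"],
    "Authoritativeness": ["sources_named", "author_byline", "claims_qualified"],
    "Trustworthiness": ["date_visible", "contact_info", "conflicts_disclosed"],
}


def audit(answers: dict[str, bool]) -> dict[str, float]:
    """Share of checks passed per dimension; unanswered checks count as failed."""
    return {
        dimension: sum(answers.get(check, False) for check in checks) / len(checks)
        for dimension, checks in CHECKLIST.items()
    }


def gaps(answers: dict[str, bool]) -> list[str]:
    """Every check that failed or was not answered, in checklist order."""
    return [
        check
        for checks in CHECKLIST.values()
        for check in checks
        if not answers.get(check, False)
    ]


if __name__ == "__main__":
    # Example draft: accurate and sourced, but no first-hand example and no byline.
    answers = {check: True for checks in CHECKLIST.values() for check in checks}
    answers["first_hand_example"] = False
    answers["author_byline"] = False
    print(audit(answers))
    print(gaps(answers))  # ['first_hand_example', 'author_byline']
```

Treating unanswered checks as failures is a deliberate choice: a question the reviewer skipped should block publication rather than pass silently.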

You can also run the content through Credify's E-E-A-T Checker for a scored breakdown across all four dimensions. A score below 50 typically indicates the article needs significant human editing before it is ready to publish.

What Google Has Actually Said

Google's official guidance on AI content, published on the Search Central blog in February 2023, is worth quoting directly:

"Our focus on the quality of content, rather than how content is produced, is a useful guide that has helped us deliver reliable, high quality results to users for years." (Google Search Central Blog, "Google Search's guidance about AI-generated content," February 2023)

The key phrase is "quality of content." The question Google's systems ask is not "was this written by AI?" but "does this content demonstrate real expertise and serve the user well?" Those are questions about the substance of the content, and applying the E-E-A-T framework systematically is the most reliable way to answer them for your own pages.

The practical implication: a 500-word article written entirely by a human expert who cites their sources, shares a specific experience, and signs their name will usually outperform a generic 2,000-word AI-generated article. Length does not substitute for credibility.

The Honest Caveat

No editing process perfectly converts AI output into high-E-E-A-T content for every topic. For YMYL ("Your Money or Your Life") subjects such as health, finance, and legal, the bar is high enough that AI assistance should be limited to research and structure, with substantial human expertise driving the actual claims made. For how-to guides, product comparisons, and informational content in non-sensitive verticals, the five edits above consistently moved E-E-A-T scores in our testing from the 30–45 range into the 65–80 range.

The goal is not to hide that AI was involved. The goal is to ensure that a real human expert has reviewed, validated, and added genuine experience to whatever the AI produced — because that is the standard Google holds all content to, regardless of how it was created.
